LazySets.jl

LazySets.jl is a Julia package for calculus with convex sets.

The aim is to provide a scalable library for solving complex set-based problems, such as those encountered in differential inclusions or reachability analysis techniques in the domain of formal verification. Typically, one is confronted with a set-based recurrence with a given initial set and/or input sets, and for visualization purposes the final result has to be obtained through an adequate projection onto low-dimensions. This library implements types to construct set formulas and methods to efficiently and accurately approximate the projection in low-dimensions.

Introduction

In this package we describe convex sets lazily (i.e., symbolically). This way we provide an exact but abstract representation, in principle for any common convex set class or operation between sets. Concrete information is obtained through evaluating the set in specific directions. More precisely, each concrete subtype $\mathcal{X}$ of the abstract type LazySet exports a method to calculate its support vector $σ(d, \mathcal{X})$ in a given (arbitrary) direction $d ∈ ℝ^n$. Representing sets exactly but lazily has the advantage of being able to perform only the required operations on-demand.

For very long sequences of computations (e.g., set-based recurrences with tens of thousands of elements), it is useful to combine both lazy and concrete representations such as polyhedral approximations. All this is easy to do with LazySets. Moreover, we provide a specialized module for handling Cartesian decomposition of two-dimensional projections. The projection can be taken to the desired precision using an iterative refinement method.

Example

Let $\mathcal{X}_0 \subset ℝ^{1000}$ be the Euclidean ball of center $(1, …, 1)$ and radius $0.1$ in dimension $n=1000$. Given a real matrix $A ∈ ℝ^{1000 × 1000}$, suppose that we are interested in the equation

$\mathcal{Y} = CH(e^{A δ} \mathcal{X}_0 ⊕ δ B\mathcal{U}, \mathcal{X}_0),$

where $CH$ is the convex hull operator, $⊕$ denotes Minkowski sum, $\mathcal{U}$ is a ball in the infinity norm centered at zero and radius $1.2$, and $B$ is a linear map of the appropriate dimensions. This equation typically arises in the study of discrete approximation models for reachability of continuous systems, see for example Frehse et al. [FGD+11].

For concreteness, we take $A$ to be a random matrix with probability $1\%$ of any entry being nonzero. Suppose that the input set $\mathcal{U}$ is two-dimensional, and that the linear map $B$ is random. Finally, let δ = 0.1. Using LazySets, we can define this problem as follows:

julia> using LazySets, SparseArrays

julia> A = sprandn(1000, 1000, 0.01);

julia> δ = 0.1;

julia> X0 = Ball2(ones(1000), 0.1);

julia> B = randn(1000, 2);

julia> U = BallInf(zeros(2), 1.2);The @time macro shows that building $\mathcal{Y}$ with LazySets is instantaneous.

julia> Y = CH(SparseMatrixExp(A * δ) * X0 + δ * B * U, X0);By asking for the (parameter-free) type of Y, we see that it is a lazy convex hull:

julia> LazySets.basetype(Y)

ConvexHullThe full type includes type parameters for the types of the arguments:

julia> typeof(Y)

ConvexHull{Float64, MinkowskiSum{Float64, ExponentialMap{Float64, Ball2{Float64, Vector{Float64}}, SparseMatrixExp{Float64, SparseMatrixCSC{Float64, Int64}}}, LinearMap{Float64, BallInf{Float64, Vector{Float64}}, Float64, Matrix{Float64}}}, Ball2{Float64, Vector{Float64}}}Now suppose that we are interested in observing the projection of $\mathcal{Y}$ onto the variables number 1 and 500. First we define the $2×1000$ projection matrix and apply it to $\mathcal{Y}$ as a linear map (i.e., from the left). Second, we use the overapproximate method:

julia> proj_mat = [[1. zeros(1, 999)]; [zeros(1, 499) 1. zeros(1, 500)]];

julia> res = Approximations.overapproximate(proj_mat * Y);We have calculated a box overapproximation of the exact projection onto the $(x_1, x_{500})$ plane. Notice that it takes about 0.064 seconds for the whole operation, allocating less than 10MB of RAM. Let us note that if the set operations were done explicitly, this would be much (!) slower. For instance, already the explicit computation of the matrix exponential would have cost 10x more, and allocated around 300MB. For even higher $n$, an evaluation will probably run out of RAM. But this is doable with LazySets because the action of the matrix exponential on the set is only evaluated along the directions of interest. Similar comments apply to the Minkowski sum above.

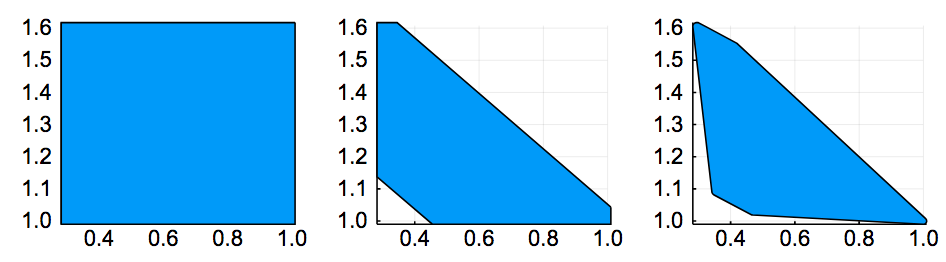

We can visualize the result using plot, as shown below (left-most plot).

In the second and third plots, we have used a refined method that allows to specify a prescribed accuracy for the projection (in terms of the Hausdorff distance). It can be passed as a second argument to overapproximate. For the theoretical background, see the section on Polyhedral Approximations.

| Error tol. | time (s) | memory (MB) |

|---|---|---|

| ∞ (no refinement) | 0.022 | 5.27 |

| 1e-1 | 0.051 | 7.91 |

| 1e-3 | 0.17 | 30.3 |

This table shows the runtime and memory consumption for different error tolerances, and the results are shown in three plots of above, from left to right. When passing to a smaller tolerance, the corners connecting edges are more "rounded", at the expense of computational resources, since more support vectors have to be evaluated.

Features

The core functionality of LazySets is:

- Lazy (i.e., symbolic) types for several classes of convex sets such as balls in different norms, polygons in constraint or vertex representation, zonotopes, special types such as lines and linear constraints, hyperrectangles, and high-dimensional polyhedra.

- Lazy implementations for most commonly used set operations, e.g., Minkowski sum, Cartesian product, convex hull and interval hull approximations, and linear and exponential maps.

On top of the previous basic type representations and operations, LazySets can be used to:

- Efficiently evaluate the support vector of nested lazy sets.

- Cartesian decomposition of lazy sets using two-dimensional projections.

- Fast overapproximation of an exact set using a polyhedral approximation, to the desired accuracy.

- Extensive visualization capabilities through the Plots.jl framework.

Manual Outline

- Getting Started

- Polyhedral Approximations

- Decomposing an Affine Map

- Fast 2D LPs

- Iterative Refinement

- Interval Hulls

- Convex Hulls

- A Reachability Algorithm Using Zonotopes

- A Hybrid Reachability Algorithm Using Zonotopes

- Concrete Polyhedra

- Parallel Approximations

- Lazy Intersections

Library Outline

- Hyperrectangle

- Infinity-norm ball (BallInf)

- Interval

- Singleton

- Origin (ZeroSet)

- Half-space (HalfSpace)

- Polyhedron in constraint representation (HPolyhedron)

- Hyperplane

- Line2D

- Line

- Universe

- HParallelotope

- Polytope in constraint representation (HPolytope)

- Polytope in vertex representation (VPolytope)

- Polygon in constraint representation (HPolygon)

- Polygon in optimized constraint representation (HPolygonOpt)

- Polygon in vertex representation (VPolygon)

- Zonotope

- Line segment (LineSegment)

- Manhattan-norm ball (Ball1)

- Empty set (EmptySet)

- Euclidean-norm ball (Ball2)

- Ellipsoid

- p-norm ball (Ballp)

- Polynomial zonotope (DensePolynomialZonotope)

- Bloating

- Cartesian product

- Convex hull

- Intersection

- Minkowski sum

- Linear map (LinearMap)

- Exponential map

- Reset map (ResetMap)

- Translation

- Affine map (AffineMap)

- Symmetric interval hull (SymmetricIntervalHull)

- Union

- Complement

- Rectification

- Conversion between set representations

- Utilities

- Parallel